This post is my study notes of Andrew Ng’s course. https://www.andrewng.org/courses/

Here we use ![]() , this gives us

, this gives us ![]() input layer units (not counting the extra bias unit). The training data will be loaded into the variables

input layer units (not counting the extra bias unit). The training data will be loaded into the variables ![]() and

and ![]() , where

, where ![]() is the image, and

is the image, and ![]() is the label.

is the label.

Let ![]() be the number of inputs(images in our case), and

be the number of inputs(images in our case), and ![]() be the number of possible lables. The cost function for the neural network (without regularization) is

be the number of possible lables. The cost function for the neural network (without regularization) is

![Rendered by QuickLaTeX.com \[\begin{aligned}J(\theta) = \frac{1}{m} \sum_{i=1}^{m} \sum_{k=1}^K &[-y_k^{(i)}\log(h_{\theta}(x^{(i)})k) \\ &- (1-y_k^{(i)}) \log(1-(h{\theta}(x^{(i)})_k)) ]\end{aligned}\]](https://sisitang0.com/wp-content/ql-cache/quicklatex.com-95b682a68119805c7e43fb53a938c366_l3.png)

To avoid over-fitting, we use the cost function for neural networks with regularization

![Rendered by QuickLaTeX.com \[\begin{aligned}J(\theta) = \frac{1}{m} \sum_{i=1}^{m} \sum_{k=1}^K [-y_k^{(i)}\log(h_{\theta}(x^{(i)})k) \\ - (1-y_k^{(i)}) \log(1-(h{\theta}(x^{(i)})k)) ] \\+\frac{\lambda}{2m} [ \sum_{j=1}^{25} \sum_{k=1}^{625} (\Theta_{j,k}^{(1)})^2 + \sum_{j=1}^{2}\sum_{k=1}^{25} (\Theta_{j,k}^{(2)})^2 ]\end{aligned}\]](https://sisitang0.com/wp-content/ql-cache/quicklatex.com-87cd4d2fa66827901a46599c732cec1b_l3.png)

When training neural networks, it is important to randomly initialize the parameters for symmetry breaking. One effective strategy for random initialization is to randomly select values for ![]() uniformly in the range

uniformly in the range ![]() . We use

. We use ![]() . This range of values ensures that the parameters are kept small and makes

. This range of values ensures that the parameters are kept small and makes

One effective strategy for choosing ![]() is to base it on the number of units in the network. A good choice of

is to base it on the number of units in the network. A good choice of ![]() is

is ![]() , where

, where ![]() and

and ![]() are the number of units in the layers adjacent to

are the number of units in the layers adjacent to ![]() .

.

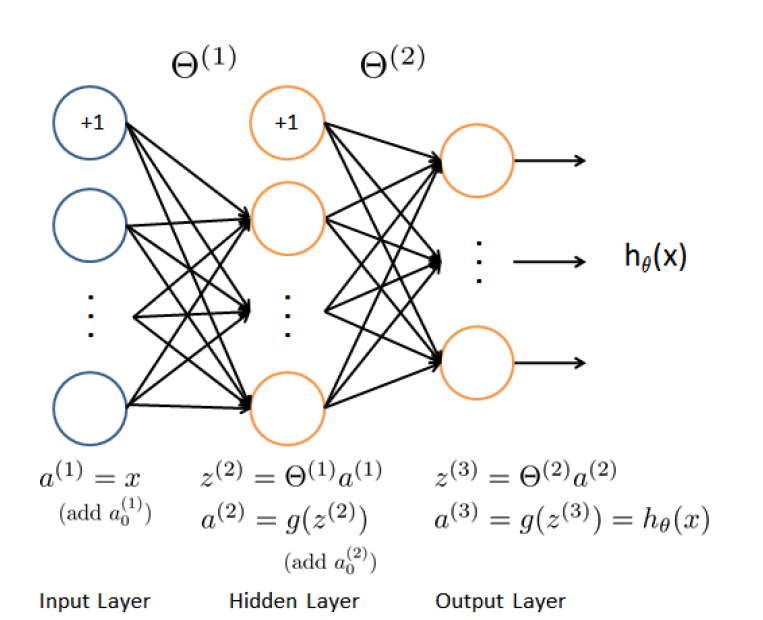

The error of the neural network is obtained by the backpropagation algorithm. The intuition behind the backpropagation algorithm is as follows. Given a training example ![]() , we will first run a “forward pass” to compute all the activations throughout the network, including the output value of the hypothesis

, we will first run a “forward pass” to compute all the activations throughout the network, including the output value of the hypothesis ![]() . Then, for each node

. Then, for each node ![]() in layer

in layer ![]() , we would like to compute

, we would like to compute

an error term ![]() that measures how much that node was responsible for any errors in our output.

that measures how much that node was responsible for any errors in our output.

For an output node, we can directly measure the difference between the network’s activation and the true target value, and use that to define ![]() (since layer 3 is the output layer). For the hidden units, we can compute

(since layer 3 is the output layer). For the hidden units, we can compute ![]() based on a weighted average of the error terms of the nodes in layer

based on a weighted average of the error terms of the nodes in layer ![]() .

.

Procedure

In detail, here is the backpropagation algorithm. We should implement steps 1 to 4 in a loop that processes one example at a time. Concretely, we should implement a for-loop for ![]() and place steps 1-4 below inside the for-loop, with the t-

and place steps 1-4 below inside the for-loop, with the t-![]() . Step 5 will divide the accumulated gradients by

. Step 5 will divide the accumulated gradients by ![]() to obtain the gradients for the neural network cost function.

to obtain the gradients for the neural network cost function.

- Set the input layer’s values

to the t-th training example

to the t-th training example  . Perform a feedforward pass (Figure ??), computing the activations

. Perform a feedforward pass (Figure ??), computing the activations  for layers 2 and 3. Note that we need to add

for layers 2 and 3. Note that we need to add  term to ensure that the vectors of activations for layers

term to ensure that the vectors of activations for layers  and

and  also include the bias unit.

also include the bias unit. - For each output unit

in layer 3 (the output layer), set

in layer 3 (the output layer), set

where![Rendered by QuickLaTeX.com \[\delta_k^{(3)} = (a^{(3)}_k - y_k)\]](https://sisitang0.com/wp-content/ql-cache/quicklatex.com-fb4efba4a610f72a23ccf90bb06fe1aa_l3.png)

![Rendered by QuickLaTeX.com y_k \in [0,1]](https://sisitang0.com/wp-content/ql-cache/quicklatex.com-43d6aac4c83bdd80e54bd70d79563214_l3.png) indicates whether the current training example belongs to class k (

indicates whether the current training example belongs to class k ( ), or if it belongs to a different class (

), or if it belongs to a different class ( ).

). - For hidden layer

, set

, set ![Rendered by QuickLaTeX.com \[\delta^{(2)} = (\Theta^{(2)})^T \delta^{(3)} .* g'(z^{(2)}).\]](https://sisitang0.com/wp-content/ql-cache/quicklatex.com-e6ef7efae285319718b9a8889a7bc704_l3.png)

- Accumulate the gradient from this example using the following formula. Note that we should skip or remove

.

.![Rendered by QuickLaTeX.com \[\Delta^{(l)} = \Delta^{(l)} + \delta^{(l)}(a^{(l)})^T\]](https://sisitang0.com/wp-content/ql-cache/quicklatex.com-51346ce717c9db9993388fc414e8052b_l3.png)

- Obtain the (unregularized) gradient for the neural network cost function by dividing the accumulated gradients by

:

:![Rendered by QuickLaTeX.com \[\frac{\partial}{\partial \Theta_{ij}^{(l)}} J(\Theta) = D_{ij}^{(l)} = \frac{1}{m} \Delta_{ij}^{(l)}\]](https://sisitang0.com/wp-content/ql-cache/quicklatex.com-6e0480d9edba9d5f80d0f7a48d671f00_l3.png)

To account for regularization, it turns out that we can add this as an additional term after computing the gradients using backpropagation. Specifically, after we have computed ![]() using backpropagation, we should add regularization using

using backpropagation, we should add regularization using

![Rendered by QuickLaTeX.com \[\frac{\partial}{\partial \Theta_{ij}^{(l)}} J(\Theta) = D_{ij}^{(l)} = \frac{1}{m} \Delta_{ij}^{(l)},\quad \mbox{for} j = 0.\]](https://sisitang0.com/wp-content/ql-cache/quicklatex.com-2fb2888b89372f6280caa7ad74f070dc_l3.png)

![Rendered by QuickLaTeX.com \[\frac{\partial}{\partial \Theta_{ij}^{(l)}} J(\Theta) = D_{ij}^{(l)} = \frac{1}{m} \Delta_{ij}^{(l)} + \frac{\lambda}{m}\Theta_{ij}^{(l)}, \quad \mbox{for} j \geq 1.\]](https://sisitang0.com/wp-content/ql-cache/quicklatex.com-cad92adf13817f57bc07177bc5b365de_l3.png)

After we have successfully implemented the neural network cost function and gradient computation by feedforward propagation and backpropagation, the next step will be learning a good set of parameters by minimizing the cost function. Since the cost function is not convex, there is no guarantee that we can always find the global minimum. But we should try to increase the number of iterations in our minimizer(say gradient descent, or conjugate descent). And perform the solver several times since the initialization is random, and different initialization may

![Rendered by QuickLaTeX.com \[\begin{aligned}\mbox{Var}(\Delta S-h\Delta F) &= \mathbf{E}[(\Delta S-\overline{\Delta S}-h(\Delta F-\overline{\Delta F}))^2] \\&= \mathbf{E}[(\Delta S-\overline{\Delta S})^2] + h^2\mathbf{E}[(\Delta F-\overline{\Delta F})^2] \\ & \quad - 2h\mathbf{E}[(\Delta S-\overline{\Delta S})(\Delta F-\overline{\Delta F})] \\&= \sigma_{\Delta S}^2+ h^2\sigma_{\Delta F}^2 - 2h~\mbox{cov}(\Delta S,\Delta F) ,\end{aligned}\]](https://sisitang0.com/wp-content/ql-cache/quicklatex.com-de2ed3dccaefcf8f4df0dcd0915dd7ad_l3.png)

![Rendered by QuickLaTeX.com \[B = \sum_{i=1}^n c_ie^{-yt_i}\]](https://sisitang0.com/wp-content/ql-cache/quicklatex.com-5f47b218dc5d620d93b0ea28ef9bfc86_l3.png)

![Rendered by QuickLaTeX.com \[\Delta B = -\sum_{i=1}^n c_it_ie^{-yt_i} \Delta y.\]](https://sisitang0.com/wp-content/ql-cache/quicklatex.com-ccc7ee64fba447b2ff1c04ee4888f2fc_l3.png)

![Rendered by QuickLaTeX.com \[D := \sum_{i=1}^n t_i\left[\frac{c_ie^{-yt_i}}{B}\right]\]](https://sisitang0.com/wp-content/ql-cache/quicklatex.com-ecc44266db255d4cb94b8413e458aee2_l3.png)